Types of Propaganda, Propaganda Techniques, and Propaganda Strategies

Social Influence Tactics and How to Counter Them

We present a list of types of propaganda, propaganda techniques, and propaganda strategies used to manipulate public opinion in the modern day.[1][2][3][4]

In other words, we present a list of social influence tactics used in everything from online trolling, to slanted reporting on TV, to print advertisements.

Furthermore, and perhaps more to the point, we explain how to safeguard yourself and others against negative messaging and how to use counter-arguments to “flip the script” on propagandists.

The main goal here is to clue you in on the history of propaganda, the nature of propaganda, and what is happening here in 2017 (with a specific focus on the online space) so you can avoid being indoctrinated, can help educate others, and can help combat negative influencers.

First some Notes:

- In general, propaganda is about emotion, not facts. It uses a type of logic, but that logic is based on fallacy and emotion, rather than formal sound and cogent logic. Thus, understanding propaganda to guard yourself against it requires not only the use of sound and cogent logic and a set of facts, it requires being on guard against emotional responses.

- Below we try to use examples from many different countries and entities (left, right, center, Hitler, and Russia), mixed with a dash of humor. We apologize if our video choice or example choice is coming off as biased. It is hard to write about bias without getting dark or stepping on ideological bubbles; thanks in advance for bearing with us!

- In case the above comment “triggered” you (in case you had an adverse reaction to terms like Hitler, Russia, left, or right), now is probably a good time to bring up the backfire effect and confirmation bias. The backfire effect describes when a person reacts to disconfirming evidence by strengthening their previous beliefs. This and a “thousand” other cognitive biases make change, resistance, and adaptation a complex subject, and make the fallacious reasoning and emotional appeals a propagandist uses all the more effective. To dig into the world of propaganda, it helps to (forgive the metaphor) “turn off the backfire effect mechanism on your bubble filter.” In real life we might want to appeal to people with emotion ourselves to avoid getting shut out due to cognitive dissonance, but for a facts page on propaganda, it makes sense to skip the white-hat tactics and try to just tell it like it is.

What is Propaganda? What are Propaganda Techniques? What are Propaganda Strategies?

Propaganda is information (delivered through any medium) designed to persuade, manipulate emotion, and change opinion rather than to inform using logical truths and facts. The aim of propaganda is not to enlighten but to change minds via the use of emotion, misinformation, disinformation, truths, half-truths, and cleverly selected facts (although one can technically propagandize true information, using emotion to sell truth, this generally isn’t what we are talking about when we use the term “propaganda”). Examples of propaganda include a wartime poster that promises “Only X ideology has the answer” or an ad on TV that tries to connect with you emotionally to sell a drug.

Positive and Negative Propaganda: Propaganda isn’t bad by its nature (after all, almost any content that relays information can be considered a form of propaganda). Consider, rhetoric has been long studied as the art of effective persuasion in speech. Shaping perception and influencing can be done in a moral, immoral, or amoral way, either for the benefit of the one, few, or many, either for a special interest or the general interest. Thus, how we should view propaganda depends on the intentions, specific tactics used, and context. On this page, we focus on combating and calling out “negative propaganda” that uses black-hat and grey-hat methods (as opposed to white-hat). Let’s call the bad kind “negative” and the good kind “positive.” If we don’t denote the type, look to the context in which the term is used.

Propaganda Medium types can include news, talk-show segments, advertising, public-service announcements, books, leaflets, web pages, comments online, speeches, posters, etc. Any audio/visual medium can act as a platform for propaganda. As Edward Bernays, father of modern propaganda, says, “THE media by which special pleaders transmit their messages to the public through propaganda include all the means by which people today transmit their ideas to one another. There is no means of human communication which may not also be a means of deliberate propaganda because propaganda is simply the establishing of reciprocal understanding between an individual and a group.”

TIP: Technically all advertising and PR is propaganda, but generally when people are concerned about propaganda they are concerned about political propaganda meant to manipulate opinion (often in a way that doesn’t align with the actual self-interest of the person being manipulated).

On Manufacturing Consent and Shaping Public Opinion: Propaganda is looking to change your mind, so logically there are only so many things it is really going to try to do at its core. It is either trying to get you to consent to something or to dissent. Propaganda either wants you to have a negative emotional response to something or a positive one. Rarely is it simply trying to give you useful information without a motive. Complex and nuanced information is very hard to propagandize, as many propaganda techniques require simple slogans, simple emotions, and simple calls to action.

TIP: To sum up Noam Chomsky’s Manufacturing Consent, in America (speaking very broadly) most of our mainstream media sells at least one of three things: 1. Capitalism, 2. Democrats, 3. Republicans (generally selling either 1+2 or 1+3 as a package). “Yumm, Bourgeoisie capitalism; tastes like liberty!” 😀 All half-joking aside, the thing to note here is that selling Bourgeoisie capitalism via “the mainstream media,” and generally selling other Americanisms, is only one of many things happening in the world today. All states essentially sell their Civil Religion (ours being one of liberty, equality, and capitalism in a two-party system). This is normal. What we want to be on guard against is buying the cart with the horse (for example, buying into foreign propaganda aimed against one of the two parties out of an affinity for the other party, or buying into a plank like prohibition out of party loyalty).

NOAM CHOMSKY – The 5 Filters of the Mass Media Machine.

Propaganda techniques describe the specific tactics used to manipulate public opinion via propaganda. Examples include name-calling, appeals to authority, exploiting emotions, and presenting conflicting theories to confuse the public (we cover a long list of these techniques below).

TIP: Alex Jones, like Mort Downey Jr. before him, does a half-info/half-entertainment talking-head sort of thing. One tactic this brand of talking head uses is emotion (anger in the case of Jones and Downey Jr.) to get you connecting with them. Once you start to mirror their anger, they start to indoctrinate you with strange views. Ever notice how Democrat is a dirty word in some circles? One tactic propagandists use is to anchor negative emotions to a word! Democracy and liberty aren’t dirty words, and neither are equality and republicanism, yet ongoing battles of information have colored these words in odd ways (and this is what we want to guard against). This is to say, just because our first point focused on Mass Media doesn’t mean Alt-Media is getting a pass. Everyone has an angle; this page is going to teach you how to spot it.

Ron Paul acts like a nut on the Morton Downey jr show 1988. The time is 1988, it’s the end of the Reagan era, and people like Jones, Stone, Trump, Roger Ailes, and more are catching on to this new hip fad. What is it? Why, it’s taking that whole TV President thing to the next level and selling anger in this mash-up talk-show/news format. What could go wrong? Next up, the Monica and Clinton years, followed by the Daily Show’s response to operation Shock and Awe, followed by different flavors of the promise of hope and change. All these are examples of modern propaganda; we report, you decide if it is the good or bad type.

Propaganda strategies describe complex sets of tactics that are used to manipulate public opinion. For example, “Oh, dearism” is a propaganda strategy that uses a number of propaganda techniques to sow confusion in the public and get them to feel like positive action is hopeless, that the world’s problems are hopelessly complex, and that the answer is found in fringe factions.

Adam Curtis – Oh Dearism.

TIP: One could refer to propaganda techniques and strategies as “propaganda tactics.” These are all just English language names for the same general thing.

Indoctrination: Indoctrination is the process of influencing a person with ideas and attitudes (AKA brainwashing). In other words, it is getting someone to accept a “doctrine” as their own ideology. This can be as simple and positive as a family, country, and school socializing young people, or it can take a more insidious form (as it does with some of the more negative forms of propaganda). The purpose of propaganda is to indoctrinate someone with a viewpoint. How that is done, what viewpoint a person is indoctrinated with, and the intentions behind the indoctrination all matter in judging the morality of the indoctrination.

The Brainwashing of My Dad Trailer. If you get what Fox News does, then you can get the gist of what the left-wing does. When a talking point is passed around and repeated ad nauseam, and when that point is meant to elicit strong emotions, thereby anchoring the emotion to the talking point… it is most certainly a type of propaganda. The mainstream media contains lots of pure and real information, after all they have standards even in this post-fairness doctrine world (like the equal time rule)… but there is a bit more going on there. When information conveyed via media is biased, and when the point is to influence and not just to inform, the news can act as propaganda that leads to indoctrination rather than unbiased information that leads to being informed.

Deprogramming (“reeducation”): The process of un-indoctrinating the indoctrinated. When refugees flee to South Korea from North Korea, they may be deprogrammed from North Korean propaganda and taught how to live in free society in The Settlement Support Center for North Korean Refugees (Hanawon). The process can take months. Why? Because people have really strong cognitive filters and biases that make them resistant to change. Propaganda notably uses emotions and shady tactics to get around these filters (in other words, indoctrinating people is arguably easier than deprogramming).

The Defectors – Escapees From North Korea’s Prison Camps.

FACT: Edward Bernays, nephew of Sigmund Freud, can be considered the father of public relations and propaganda. Bernays literally wrote the book on Propaganda, public relations, and manipulating public opinion. His work, rather unfortunately, inspired Joseph Goebbels, propaganda master of the Nazi party.

HOW TO CONTROL WHAT PEOPLE DO | PROPAGANDA BY EDWARD BERNAYS | ANIMATED BOOK REVIEW.

“THE conscious and intelligent manipulation of the organized habits and opinions of the masses is an important element in democratic society. Those who manipulate this unseen mechanism of society constitute an invisible government which is the true ruling power of our country.” – Bernays

“If you tell a lie big enough and keep repeating it, people will eventually come to believe it. The lie can be maintained only for such time as the State can shield the people from the political, economic and/or military consequences of the lie. It thus becomes vitally important for the State to use all of its powers to repress dissent, for the truth is the mortal enemy of the lie, and thus by extension, the truth is the greatest enemy of the State.” – Often attributed to Goebbels, on “the Big Lie” (See tactics below for “the big lie” tactic).

Propagandists are those who spread propaganda. Here it is important to note that one who trolls or spreads propaganda is either doing so knowingly or not. This is to say, they are either spreading information that they believe to be true, spreading information they know is false, or walking some line in-between these things (such as one who is trolling or propagandizing, but believes their ends justify their means). When dealing with others we should assume the best. Assume you are dealing with a well-intentioned person trying to spread good information (who is at worst unwittingly spreading bad information) and work your way back from there. Confronting the world with positivity rather than paranoia is a good first step. No, you’ll never change the mind of a bot, but you may influence others viewing the thread or, you never know, could influence the entity behind the bot. Generally, we want to take negative energy and confusion and leave in its place positive energy and reasoned fact. Consequently, the general frame with which we approach the world matters.

TIP: Again, not all propaganda is bad. Self-help is essentially propaganda and involves self-indoctrination. A classical example of this sort of white-hat propaganda (or book of influence techniques in this case) is Dale Carnegie’s classic How to Win Friends and Influence People.

Types of Propaganda, Techniques, Tactics, and Strategies

Below is a list of the types of truth, then some key propaganda techniques, tactics, and strategies, then a long list of propaganda tactics in alphabetical order.

These lists, especially the last long list, will mirror lists found on Wikipedia and Daily Writing Tips (not because I desire to copy their lists, but because we are trying to provide a comprehensive list).

To add value to the conversation, our list will expand on those lists, will tie back into our pages on the basics of logic, reason, argument, and truth (thereby giving you a counter-argument technique for every strategy used), and will offer extra techniques and strategies and unique descriptions of methods.

In other words, not only is this list “much bigger, trust me, it’s a great list” but also it comes with a handy guide as to how to combat propaganda tactics.

TIP: We can say a propagandist is one who uses criminal virtue (the black-hat/grey-hat version of virtue). One can use grey-hat and black-hat tactics to combat criminal virtue, but “going low” is rarely the right choice. It is better to stay white-hat and just be that much more clever, it avoids the tables being turned on you and you finding yourself on weak footing. That said, be aware of our “bed of nails” theory.

- Facts: Statements that are logically true.

- Truth (Real Information): A collection of statements that present logically true information in a way that seeks to inform and not mislead.

- Opinion: A type of information that seeks to convey thoughts.

- Belief: A type of opinion someone believes to be true, either justified or not.

- Counterfeit information: Information that isn’t truth, facts, or clearly stated opinion/belief.

- Lies: Statements that are logically false, contain falsehoods, or are generally fallacious (usually intentional, as the term generally implies “an intentional lie”).

- Half-Truths: A mix of real and fake information that uses a mix of correct logic, fallacious logic, lies, and truths. Otherwise known as BS. Most propaganda is BS.

- Disinformation: A type of counterfeit information that uses deliberate lies meant to mislead, including hoaxes.

- Misinformation: A type of counterfeit information that uses false or inaccurate information. Can range from that which is deliberately intended to deceive to honest mistakes. There is some overlap between disinformation and misinformation, but generally, disinformation seeks to disinform (spread lies) and misinformation seeks to misinform (spread half-truths and confuse).

- Rhetoric: Using any form of information to persuade people with words.

- Propaganda: Using any form of information to persuade people using any medium, especially counterfeit information.

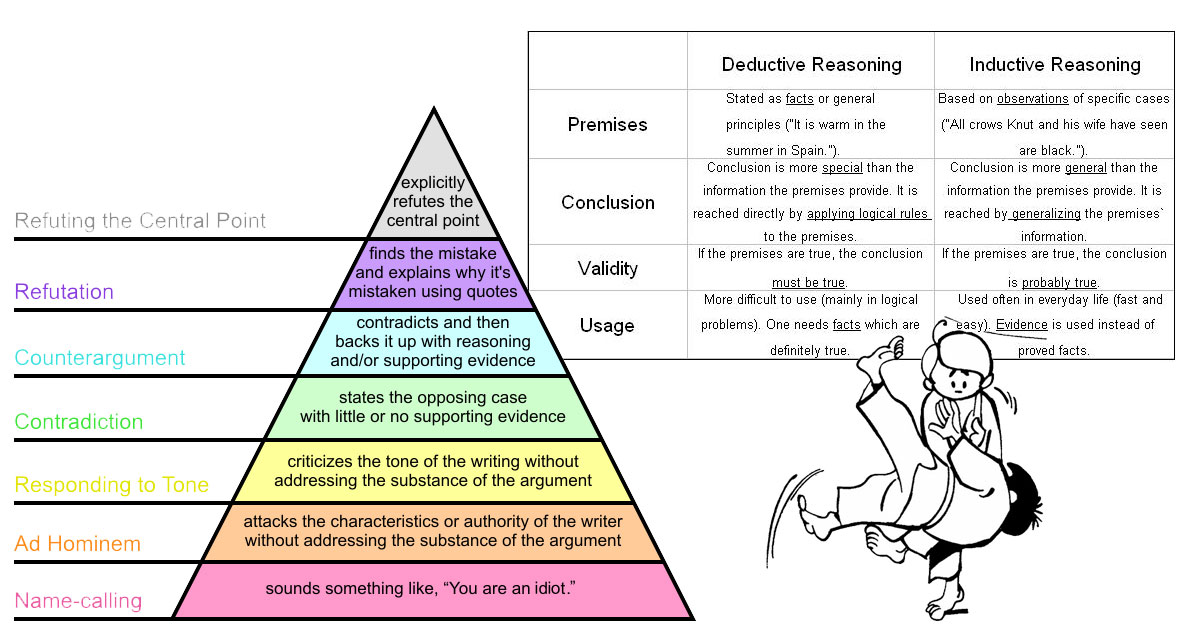

TIP: In logic and reason, deductive reasoning uses certainty and inductive reasoning uses probability. Only things that logically follow from one another are certain conclusions. All other conclusions are probable. Fallacious reasoning is reasoning that doesn’t follow the rules and standards of reasoning. A logic 101 course will give you most of the tools you need to spot propaganda (as a lot of propaganda is based on arguing logical fallacies). There are few things as satisfying as explaining to someone why their argument is unsound and invalid (or uncogent and weak), consider learning about the basics of logic and reason!

Some terms applying to logic and reasoning showing how reasoned arguments work.
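To make the difference between proper and fallacious reasoning concrete, here is a minimal sketch in Python. It assumes a simple truth-table approach: a deductive argument form is valid only if no combination of true/false values makes every premise true and the conclusion false. The names `is_valid` and `implies` are our own, made up for illustration, and are not from any library.

```python
from itertools import product


def implies(a, b):
    """Material implication: "if a then b" is false only when a is true and b is false."""
    return (not a) or b


def is_valid(premises, conclusion, num_vars):
    """Hypothetical helper: brute-force every true/false assignment.

    The argument form is valid if there is no assignment where all
    premises are true and the conclusion is false.
    """
    for values in product([True, False], repeat=num_vars):
        if all(premise(*values) for premise in premises) and not conclusion(*values):
            return False  # found a counterexample
    return True


# Modus ponens (proper reasoning): if P then Q; P; therefore Q.
modus_ponens = is_valid(
    premises=[lambda p, q: implies(p, q), lambda p, q: p],
    conclusion=lambda p, q: q,
    num_vars=2,
)

# Affirming the consequent (a formal fallacy): if P then Q; Q; therefore P.
affirming_consequent = is_valid(
    premises=[lambda p, q: implies(p, q), lambda p, q: q],
    conclusion=lambda p, q: p,
    num_vars=2,
)

print("modus ponens valid:", modus_ponens)
print("affirming the consequent valid:", affirming_consequent)
```

The first form passes the check and the second does not, which is the pattern to watch for: a fallacious argument can have true-sounding premises and still fail to support its conclusion. This only covers simple deductive forms; inductive (probable) reasoning and informal fallacies need judgment rather than a truth table.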

Some core propaganda tactics:

- Anchoring: Connecting two things that don’t connect naturally. Such as anchoring a negative emotion to a term via name-calling. Or conversely, transferring positive qualities from one entity to another by associating them.

- Appeal: Propagandists will always try to appeal to core human traits, compassion, disgust, the need to feel a part of something, dissatisfaction and depression, the need for direction etc.

- Black & White: Propagandists will often offer an A or B choice (when not trying to sow confusion). If someone offers you two choices, A) Brand X is ideal and always good, and B) Everything brand Y does is “less than” X… you are likely dealing with propaganda. The world is full of complex grey areas, not black or white choices. Example, “all taxation is theft – period.”

- Confusion: Propagandists will often try to confuse an issue. Generally, they will confuse all issues except their own, and then on their own issue, they offer a simple answer. However, in the case of cover-ups, they will spread tons of misinformation, disinformation, and fodder for conspiracy theories to confuse the issue.

- Deflection: Propagandists will always deflect, they always want you discussing their issues from their frame, and will always pull you away from your core point and your frame (often with a whataboutism). For example, you are talking about Single Payer in America and tax dollars, they’ll say “what about the military-industrial complex with tax dollars in the Middle-East,” or “What about the Affordable Care Act’s individual mandate.”

- Doubt: Propagandists like to cast doubt. They say, “but there is no such thing as objective truth.” Well, that sounds catchy, but actually, that is a load of BS. Computers and math work, there is lots of objective truth. The whole art/science of logic and reason is a study in finding objective truth… this page is a part of a series on the nature of truth.

- Emotion: Propagandists will almost always use emotion instead of facts. They don’t want to persuade you with facts, they want to anchor ideas opposed to theirs with negative emotion, and anchor ideas aligned with theirs to positive emotion. They don’t want to use logical truths to get you to change your mind, they want to get you emotional and indoctrinate you while your defenses are down.

- Fallacy (Fallacious Reasoning): Propagandists love fallacious reasoning. If proper reasoning methods aren’t being used, it is a big red flag you are dealing with propaganda. The most common type is connecting dots that don’t connect, “Hillary’s emails” (anger and doubt) + “conflict in the Middle East” (anger and hopelessness) = “X other nation is your true ally and you can’t trust the United States” (presenting a new identity, a new hope, and a new purpose).

- Framing and Re-framing: Controlling the perspective by which something is viewed and controlling which facts are presented in what context (cherry picking).

- Identity politics: Humans have cognitive biases like confirmation bias, in-group favoritism, and “filter bubbles.” A propagandist tries to color bubbles using propaganda, and meanwhile, we (the anti-propagandist) want to combat this by giving people the tools to build better filters. A propagandist will say “everything that disagrees with me is fake news”… we simply want to teach people logic and reason so they can spot truths, lies, and half-truths for themselves. If a person knows logic, then they know how to spot real information. If a person knows what real information looks like and what biases humans are subject to, they will know when they are being sold half-truths and emotion meant to exploit their biases.

TIP: Many propaganda techniques can be said to mirror formal and informal fallacies. See a list of fallacies. I can’t stress it enough, understanding the difference between proper deductive and inductive reasoning and fallacious reasoning is the big key to understanding propaganda.

TIP: The right-wing has a number of “republican strategists” that stick out when discussing propaganda, one is Lee Atwater, another is Roger Ailes, but perhaps the most relevant and interesting today is Roger Stone. Roger Stone was a strategist involved in both the Gore vs. Bush election and Donald Trump’s 2016 campaign, and he was a central figure in the Clinton years (he is one of the reasons why the anti-Hillary message came back so hard in 2016). He was also one of the people who knowingly pushed the false narrative about Obama’s birth certificate. This is the sort of grey-hat and common propaganda we have been dealing with for decades now in America (since the post-WWII era of TV presidents, but under the media deregulation since the 80’s especially). Of course, there are left-wing equivalents (I won’t spin this and tell you there are not), and surely there are companies and parts of government who put out their own talking points (especially companies now that we have even more deregulation like Citizens United), but two-or-more wrongs don’t make a right! When we add to this the reality that the Russians seemed to take a notable part in the game of influence in 2016, it starts to point us to this idea that we really need to understand and be able to defend against divisive propaganda in our daily lives.

Get Me Roger Stone | Official Trailer [HD] | Netflix.

TIP: Emotions that can and will be used against you via propaganda include: Affection, Anger, Angst, Anguish, Annoyance, Anticipation, Anxiety, Apathy, Arousal, Awe, Boredom, Confidence, Contempt, Contentment, Courage, Curiosity, Depression, Desire, Despair, Disappointment, Disgust, Distrust, Ecstasy, Embarrassment, Empathy, Envy, Euphoria, Fear, Frustration, Gratitude, Grief, Guilt, Happiness, Hatred, Hope, Horror, Hostility, Humiliation, Interest, Jealousy, Joy, Loneliness, Love, Lust, Outrage, Panic, Passion, Pity, Pleasure, Pride, Rage, Regret, Remorse, Resentment, Sadness, Saudade, Schadenfreude, Self-confidence, Shame, Shock, Shyness, Sorrow, Suffering, Surprise, Trust, Wonder, Worry.

Some general propaganda strategies and terms worth pointing out in their own section:

- Emotion politics: Emotion is one of the keys here. Watch out for your emotions getting the best of you (called “being triggered”) and watch out for your emotions being exploited. First, you start mirroring the emotions of a talking head, then you start connecting with them, and then next thing you know you are parroting some slogan you heard them say thinking of it like your own and even defending it (insidious, right!?).

- Fire Panic! A traumatic event is used to make broad sweeping changes. Beware the fire panic, it is always followed by a Night of the Long Knives and the loss of basic rights!

- Oh, Dear: A tactic where confusion is sowed in avant-garde style to undermine peoples’ perceptions of the world, so they never know what is really happening.

- Name-calling: Name-calling is easy and used often to discredit people. A propagandist will seek to associate the opposition with something negative to dismiss their viewpoints.

- Red herring: Presenting data or issues that, while compelling, are irrelevant to the argument at hand, and then claiming that it validates the argument.

- Troll Armies: For real, there are people/groups that control what we can call “troll armies” (that is, bots and people who troll online). These trolls and troll armies go online and try to divide the public and spread propaganda. It is a thing, it is a problem, and it isn’t just Russia doing it (but it is, in official U.S. opinion at least, also Russia). Be on guard against this; it doesn’t matter if it is a right-winger from some forum, a left-winger from another one, a wealthy and ideological American hiding in the shadows, or a robot from Russia with love, the end result (the spreading of propaganda and its manipulative effect) is what we want to be on guard against.

- Useful Idiot: A person used as an unwitting pawn. Often a person who spreads propaganda for a cause that isn’t their own. If you repeat a talking point or spread a meme that was planted by a cause that isn’t your own, this is not good.

- The Idea that the Enemy of Your Enemy is Your Friend: Hillary wasn’t perfect, so Putin must be your best friend… no, that is not how logic works. Take a step back and check yourself. That is a logical fallacy.

- Whataboutism: A tactic where the propagandist goes, “but what about this other thing.” One of the most common tactics. It is often used in conjunction with the red herring.

TIP: A conspiracy theory is generally actually a speculative hypothesis. Sometimes two data points may seem to connect, but that doesn’t mean they do. This is the root of conspiracy theories (which are generally actually conspiratorial speculative hypotheses). Don’t confuse speculation with fact, and if you speculate, state certainty, specifics, and nuances. For example, we wouldn’t say “Russia hacked the election.” We would say “according to official declassified documents, specifically the January report, Background to Assessing Russian Activities and Intentions in Recent US Elections (a document produced by select members of the CIA, FBI, and NSA under the Office of the Director of National Intelligence), there is a high degree of certainty that Russia ordered an influence campaign in 2016 to undermine public faith in the US democratic process and to sway the election that included: the use of troll bots and trolls on social media, hacks (including DNC hacks; as we later learned, with fake documents inserted), leaks to sources like Wikileaks, targeted messaging on Russian state media like RT, and more.” Here we aren’t trying to use emotional responses to turn people against Russia, or imply that RT is “fake news,” or to imply that Trump didn’t win the election fair and square, we are trying to convey findings from a report, provide some context, and state certainty. The difference between “Russia hacked the election, that is why Trump won” and “according to the intelligence report it was highly likely that Russia interfered to hurt Clinton, but it doesn’t speak to whether or not this effort did in fact influence the election (and certainly it did not insinuate Trump colluded or didn’t win fairly)” is the difference between presenting counterfeit information and propaganda and presenting real information meant to inform on a difficult subject with no simple A/B answer or slogan.

TIP: When we talk about propaganda, we often focus on Nazi Germany, Russia, or the West… which is because, you know, those are the entities who came up with most of the world’s propaganda techniques. Psychology and marketing are neutral fields of social science… some of that field has been weaponized more than a few times from WWI to today. That is the reality of the world we live in. One reason that, of all these sources, a lot of focus is on Russia specifically is… a lot of these techniques get credited to them specifically. Simple as that. As I note above, we are going to switch between Nazi Germany, Russia, and the West, left, right, and center. With that in mind, the section below is focused on Russia (for the reasons stated above).

A guide to Russian propaganda. Part 1: propaganda prepares Russia for war. A guide to Russian propaganda. Part 2: Whataboutism. A guide to Russian propaganda. Part 3: Rapid fire conspiracy theories. Russia’s Online Troll Army Is Huge, Hilarious & Already Everywhere. The Sunday Show – Uncovering Russian Propaganda With Former ‘Russia Today’ Anchor.

TIP: If you see comments online that are off-subject (sowing confusion or deflecting) and are focused on negative emotion over fact… there is a chance you are dealing with an online troll or someone indoctrinated by propaganda. In all cases, you’ll probably want to employ some counter-argument tactics.

Some general propaganda counter-argument tactics include:

- Call them out: Call out propaganda strategies by name, explaining the tactic to the audience. Example, “quit this whataboutism; the focus is on X, our subject, not on this unconnected Y business. What about Y?! Y has nothing to do with this subject, stop avoiding the issue with logical fallacies, it makes you seem like you are spreading propaganda.”

- Connect: We are all human, thus anyone who is even somewhat legit will have common ground with you. Find it and connect over it. It is hard to have an enemy with whom you share some planks and platforms. Your information doesn’t require you to have enemies, their propaganda does often require demonizing you. You win when you connect.

- Inform: Spread the facts and stay on issue. If they want to focus on propaganda, dismiss rather than debunk and use your time and energy to spread facts. Make them waste their energy trying to weave tales, you focus your energy on fact-checkable facts and spreading awareness of real related issues. Bernie Sanders is a master of this.

- Keep calm: Putin’s face got red maybe once or twice in his life, but most of the time he has a cool smile. The propagandist is trying to use emotion, don’t let them get you emotional. Keep a cool head and stick to your message. It is exactly like dealing with children, generally, keep your cool, and show emotion tactfully.

- Don’t be a saint (you likely aren’t one): Humans are human, a good humanist respects the grey areas of life. You aren’t the Pope, don’t try to be all pious, virtuous, and saintly. Being the human you are and admitting that you could be wrong and have faults is a stronger position than not. Those who are always good can be taken down with a simple smear campaign or a little blackmail.

- Don’t play their game (be the “DM”, not the pawn): You can toy with a propagandist, you can use emotional counters, but don’t let them take the lead and don’t play their game. In other words, counter, but don’t get sucked into their world. You can either lead the conversation, or you can have a side conversation, but never let them lead the conversation.

TIP: In simple terms, we win against propagandists by sticking to the facts. If we have a stronger platform (a platform of correctness, logic, reason, etc) then no amount of negative propaganda can take the century. If you are writing code, you can’t say 1+2=6 and expect your program to work. The tricksters may win the day, but they tarnish their long-term reputation as the world becomes more informed and educated. Your duty is to help them tarnish their reputation and to help educate others.

TIP: Some propaganda tactics make sense in polite society. An ad for a new video game, a friendly and catchy name for a great policy, a little name-calling to denote negative influencers. One has to get their hands dirty sometimes, but that isn’t an excuse to embrace the darker side of criminal virtue.

NOTES: Some of the “big list” below is borrowed from Wikipedia and Daily Writing Tips at the moment while I better collect my thoughts (really want that to be clear while it still clearly mimics their lists). Will keep the citations moving forward, but forgive the few copy and pastes not specifically cited below (I am citing them here generally).

The Long List of Propaganda Tactics

Below is a long list of propaganda tactics collected from the internet or relayed by me (from memory, or because I coined a general social tactic); some overlap with each other. The idea is to create an exhaustive list over time, even if that means overlap. Feel free to contribute any tactics below by commenting.

TIP: Remember, in ways, every formal and informal fallacy is essentially also a propaganda technique. See a list of fallacies. Also, the goal is to manipulate cognitive bias. So, see a list of cognitive biases too. Also, this is all generally “social psychology tactics,” so one can look to things like “gaining techniques” and the psychology of compliance in general.

Ad hominem in general (deflection): Focusing an attack on the opposition rather than on the argument or issue. Counter: Bring the focus back to the issue, reiterate the key point, point out their focus on opponents rather than issues. TIP: Ad hominem attacks aren’t useless, in some instances, questions of personal conduct, character, motives, etc., are legitimate and relevant to the issue. Still, generally speaking, an attack on a person speaks little to the facts behind an argument or the argument being made.[5]

Ad hominem tu quoque (meaning “You also”): This technique is when you respond to your opponent by accusing them of acting in a way that is inconsistent with the argument. For example, a father may tell his son not to start smoking as he will regret it when he is older, and the son may point out that his father is or was a smoker. That is a good point, but it doesn’t change the facts.

Ad hominem circumstantial (Bulverism): A technique that combines a genetic fallacy with circular reasoning. A tactic where one assumes that their opponent is wrong, and explains their error. Of course, explaining why a person made an error (that they did or didn’t actually make) is completely irrelevant. FACT: C. S. Lewis coined the term Bulverism.

Ad hominem guilt by association: A technique that discredits an argument by associating the person making it with a disliked person or group that holds a similar position.

Ad nauseam: The repeating of a slogan over and over again. When repeated enough a slogan will begin to be accepted as true. If we keep hearing “pro-life” instead of “anti-choice” or “taxation is theft” instead of “paying our fair share” or “gay marriage” instead of “marriage equality” it can become true. All these phrases mean the same thing, but the emotional impact is different. A propagandist will find a simple emotional slogan and will propagandize it “Ad nauseam.” Counter: Always use a better slogan… this is one of those fight fire with fire things. Saying “marriage equality” instead of “gay marriage” is still white hat.

Appeal to authority: Humans obey authority on average. As children we look to parents, then we look to teachers, then we look to peers, then we look to the state, etc. We naturally obey authority figures (and perceived authority figures such as celebrities). Propagandists know this and they will always try to sell a strong man and sell admirable qualities to sell a brand. Counter: You could always try bringing up the American Revolution, liberty, the bill of rights, individualism, etc. Someone wants you to obey authority, so make them agree on a time when obeying authority went against their own values.

Appeal to fear: Fear is a basic human emotion common on both the left and right. The media spends a lot of time exploiting fears, propagandists do too. Our anxieties and concerns about the future are used as emotional exploits to sell ideology. Counter: Fear is countered with love and understanding. They say fear X, you say, “I get it, I feel that way sometimes too, but we are all humans and we all share the same fears… we can connect over that.”

Appeal to prejudice: Humans naturally favor their in-group and fear their out-group. It is easy to exploit the many results of this basic human attribute. Exploiting the natural fear of “others” and the desire to belong to a group is a favorite tactic of propagandists and authoritarians. Counter: Look for other connections, despite identity politics, at the core we all share human traits. “We all bleed the same blood.” Also, point out the tactic and specifically focus on how this is being used to distract from more important issues.

Appeal to the stone (argumentum ad lapidem): A tactic where one dismisses a claim as absurd without demonstrating proof for its absurdity. It is like a fallacious version of reasoning by reduction (using facts to show a conclusion is absurd).

Artificial Dichotomy: A type of black and white A/B choice. The difference here is that this is not only when someone tries to claim there are only two sides to an issue, but when they imply that both sides must have equal presentation in order to be evaluated. A classic example is the “intelligent design” versus “evolution” controversy, another example is “climate change denial” versus “climate change science.” Just because there are two general choices doesn’t mean there are only two positions to take and it hardly means both sides are equal. Counter: Point out the many other choices and point out that the sides should both be considered (but aren’t inherently equal).

Audio tactics: Using sounds to depress or excite people and to generally manipulate behavior. Everything from national anthems to catchy jingles, to distortions.

Bandwagon: Humans naturally desire to be part of a group. This tactic exploits the desire to conform and be part of a group by encouraging adherence to or acceptance of specific planks. Counter: A group based on being against another group is negative. People also desire not to have a life filled with negative energy. Help guide the person toward a broader group based on positive emotions (giving them a replacement group). Do you want to be part of the anti-X type group (focused on bad vibes), or do you want to be part of the coalition of all-types who share Y values (focused on good vibes)?

Beautiful people: Associating brands with people who have attractive attributes (like beauty, fame, strength, or wealth).

Big Lie: Using a complex array of events to justify an action or narrative. What you do is take a carefully selected collection of truths, lies, and half-truths that all seem to tell a story (which is actually revised history) and use them to construct a story that eventually supplants the public’s accurate perception of the underlying events. For example, one could tell the story of the rise of fascism in WWI focusing only on how “our heroes” fought the oppressive liberal order, to justify the rise of fascism in WWII. Or, in this same vein (as Wikipedia offers for an example), after World War I the German “stab in the back” explanation of the cause of their defeat became a justification for Nazi re-militarization and revanchism.

Black-and-white fallacy, or giving the illusion of an A/B choice: A type of logical fallacy (which is the main thing that the art/science of logic and reason teaches you how to spot and combat). In this case, it is giving people an A/B choice where A is presented as “very bad” and B as “very good.” “You either love liberty like a real patriot, or you support those evil trade unions… it is an A/B Choice.” Counter: It isn’t an A/B choice, point out why it isn’t and why supporting B is actually the stance with good qualities that they have falsely attributed to A.

Cause and Effect Mis-match: Connecting a cause and effect relationship that isn’t there (a type of logical fallacy). Generally, systems and their relations are complex. A fast food place replaces their workers with machines, a pro-market blog immediately decides the cause was minimum wage hikes. Counter: Point out other potential causal factors and point out that cause and effect relationships are rarely that simple.

Cherry-picking, out-of-context, distortion of data, card stacking, selective truth: Picking and choosing which facts you present and how you frame them. As Wikipedia says well: Richard Crossman, the British Deputy Director of Psychological Warfare Division (PWD) for the Supreme Headquarters Allied Expeditionary Force (SHAEF) during the Second World War said, “In propaganda truth pays… It is a complete delusion to think of the brilliant propagandist as being a professional liar. The brilliant propagandist is the man who tells the truth, or that selection of the truth which is requisite for his purpose, and tells it in such a way that the recipient does not think he is receiving any propaganda… […] The art of propaganda is not telling lies, but rather selecting the truth you require and giving it mixed up with some truths the audience wants to hear.” In other words, propaganda is the art of selective truth-telling (AKA a popular flavor of BS).

Classical conditioning: Anchoring one idea or stimulus to another (for example, using emotion to connect A and B, even when A and B don’t connect logically). Like Pavlov did to the dog. Counter: Anchor positive emotions to those ideas and stimuli (take back the term) and/or use different terms (anchoring positive emotions to the new term and not using the old term anymore).

Cognitive dissonance: Using a favorable stimulus to prompt acceptance of an unfavorable one, or producing an unfavorable association.

Color tactics: Red makes you feel rushed, yellow makes you want to buy something, some restaurants use these colors for that very reason (get you ordering up and out fast). We have 5 senses (plus), all those senses are on the table for exploitation along with every other human trait. Our neurology is wired for efficiency, we don’t always ask ourselves “why do I have this impulse” often we just run with our impulses (which isn’t always a good thing when our impulses are a result of manipulation).

Common man (or “plain folks”): The common man, the forgotten man, etc. It is when elites try to act like regular ol’ folks (mirroring their mannerisms, ideology, and policy stances) to try to connect with an audience that they wouldn’t otherwise connect with. Counter: Point out the ways in which they are nothing like the common man and the ways in which their policies will hurt the common man (if this is the case; it is often the case).

Cult of personality: The creating of a cult around a personality. John Wayne, Ronald Reagan, Obama, Trump, Hitler, Lenin, Castro, Justin Bieber. If there is a fan club around a person, that can be used to manipulate people. This isn’t a statement on the person, it is a statement on how the fan base can be exploited. “John Wayne loves voting Republican, you don’t want to offend the Duke, do ya’ Pilgrim?… be like the Duke, vote for Reagan and deregulation.” <— the messaging is generally more subtle, I’m trying to make points not present well-crafted propaganda.

Demonizing the enemy: Propagandists often seek to dehumanize and denigrate the opposition to sway opinion against them, anchor negative emotions to them, make them “the elite”, make them “out-group”, etc. This tactic is the tactic of children, it is also insanely effective. Counter: Seek to connect and show similarities. Well Ted, you said I was Satanic, but I go to church with your Mom. Haven’t seen you there recently, is there something you aren’t telling us? Are you trying to demonize and deflect because you haven’t gotten right with the church recently? Or, embrace the slander like Trump did with the “deplorable” comment or Hillary did with the “nasty woman” thing. There is no great answer here, the mud-slinging is effective and hard to deal with.

Old WWII propaganda trying to explain why populism isn’t ideal. Is the idea of labor and management doing it together a positive message? I would generally say yes. Propaganda like the above sells National pride and a positive message, but it also does this by demonizing other ideologies (rightly or wrongly).

Demoralization: Propaganda meant to weaken resolve, to erode fighting spirit, and encourage surrender or defection. Counter: Don’t get demoralized, use the opportunity to boost morale.

Dictate: A tactic that speaks to dictators. An appeal to authority technique that tries to simplify choices and present an idea or cause as the only viable alternative. “There is an A/B choice, A is correct and American, which one do you choose evil Communist B or amazing super patriot A like everyone else?”

Disinformation: A broad class of propaganda which is false information spread deliberately to deceive. Many of these other techniques are types of disinformation. Disinformation can involve the deletion of information from public records, for the purpose of making a false record of an event or the actions of a person or organization, including outright forgery of photographs, motion pictures, broadcasts, and sound recordings as well as printed documents. This is different from misinformation (which is simply incorrect information).

Dog Whistles: You can’t say X-word anymore, but you can still call ’em Y and Z and your base knows exactly what you mean. Counter: Call them out. “Y and Z? That sounds a lot like the X-word? What exactly do you mean by that? Are you implying that all polite versions of the X-word are Y and Z?”

Door in the face: A barter tactic where you start by asking for more than you want then settle on what you wanted in the first place. The persuader attempts to convince the respondent to comply by making a large request that the respondent will most likely turn down, much like a metaphorical slamming of a door in the persuader’s face.

Doublespeak: Say one thing. Imply one or more other things. A lot of propaganda involves doublespeak. One can double speak with body language, tone, writing, or any other communication method.

Euphemism: A milder or more pleasant word or phrase used in place of a harsh or blunt one. Closely related to the dog whistle.

Euphoria: The use of an event that generates euphoria or happiness, or using an appealing event to boost morale. Euphoria can be created by declaring a holiday, making luxury items available, or mounting a military parade with marching bands and patriotic messages. “Be a patriot, buy liberty bonds on the 4th of July, they have flags on them!”

False Association, or false analogy: Hitler liked art, therefore all artists are NAZIs. Just because some traits are shared between two systems doesn’t mean all attributes are shared. This can also involve exaggerating the extent to which a trait is shared. Hitler called the news fake right before WWII, that means WWIII is about to happen.

Fear, uncertainty, and doubt (FUD): Disseminating false or negative information to undermine adherence to an undesirable belief or opinion.

Flag-waving: Using nationalism or patriotism to sell an idea. A common thread here that speaks to in-groups and the desire for acceptance.

Foot in the door: A tactic where you “get your foot in the door” by getting some basic compliance and then pushing for more. A common technique of salespeople, “you bought the air freshener, you’re only one crushing debt away from that sports car!” A form of up-selling.

Framing and re-framing: Speaking loosely, everything depends on frame of reference. It’s all about framing an argument to help people see it from your viewpoint or re-framing an argument (controlling perspective). If I use rhetoric to drop you in X-tyrant’s shoes and start weaving a big lie, pretty soon you’ll be sympathizing with him (as I’ll make you see the human side of him and will ignore the tyrant side or spin it).

Gaslighting: Sowing seeds of doubt in a target individual or group, hoping to make them question their own memory, perception, sanity, and norms. Using persistent denial, misdirection, contradiction, and lying. Comes from an old movie called Gaslight where the main character is driven insane by this sort of BS. “Oh, you don’t like being oppressed and staring at the Yellow Wallpaper all day… you must be going crazy and have hysteria, maybe shock therapy will work?”

Gish Gallop: Bombarding a political opponent with obnoxiously complex questions in rapid-fire during a debate to make the opponent appear to not know what they are talking about.

Glittering generalities: Emotionally appealing words that are applied to a product or idea, but don’t logically relate to that specific product or idea. “Do you feel sad sometimes? Try this drug.” (everyone feels sad sometimes, so anyone is bound to connect). This is used in cold reading where the aim is to make very broad ambiguous statements that apply to nearly everyone but sound personal and specific. This technique has also been referred to as the PT Barnum effect. “There’s a sucker born every minute”… don’t be a sucker (and further, we can all be suckers sometimes; one reason that line works is that we can all relate to it).

Half-truth: A statement that is partly true or only part of the truth, or is otherwise deceptive. Half-truths are almost always used in propaganda. They are one of the main types of counterfeit information.

Hot Potato: An inflammatory (often untrue) statement or question used to throw an opponent off guard, or to embarrass them. The fact that it may be utterly untrue is essentially irrelevant, it brings controversy to the opponent and throws them off guard. Counter: Call them out for making stuff up, make a joke, say “why is that what you do in your free time,” or “so you are resorting to making things up, you should take a course in logic.” Or, less savory, if you know anything inflammatory about them it may be the time to bring it up.

Ignoratio elenchi, or ‘ignoring of a refutation’ (Irrelevant Conclusion AKA Missing the Point): An argument that fails to address the issue in question (be it valid or invalid).

Inevitable victory: A bandwagon technique. The assurance of uncommitted audience members, and reassurance of committed audience members, that an idea or cause will prevail. “They say we aren’t going to win, but we are going to win, you know we are going to win, my fans, who are patriots by the way, know we are going to win, trust me, there will be so much winning, our in-group are going to win because we are winners and the other guys are losers, it’s us versus them, and you can see it already, we are winning, just follow my pocket watch, winning…” (every election in the US both sides have a tendency to do this; yet one side always loses).

Insinuation: A tactic where one uses the complexities of symbolic language to practice a form of doublespeak. Where one dances around what they mean and imply it without saying it directly (or without making it the main point of a subject).

Intentional Vagueness: The use of deliberately vague generalities that allow the audience to supply its own interpretations. “We are going to do this healthcare so good, it won’t be bad, it’s great healthcare, I think people will really love this healthcare, it is very great.” “Hope and Change” “Make America Great” these are intentionally vague statements that let people fill in the blanks and ascribe their own meaning.

Join the crowd: A bandwagon technique. Reinforces the idea that people should come join the winning crowd. “So much winning!”

Labeling or name-calling: Labeling can be positive or negative. The idea is to hurt or help a brand via labels. The lowest of the low brow is negative name-calling and labeling (like saying “you are an idiot” in an argument). This tactic uses name-calling to anchor negative labels and emotions to people thereby discrediting them with a single label. The tactic aims at the very bottom of the pyramid of reasoned counter-arguments (see Graham’s Hierarchy of Disagreement in the image below). Why? Because it is so darn effective. With a simple name one can dismiss everything a person says, or conversely, a positive name can lift up everything a person does. In more complex terms, any emotion or connotation can be anchored to a term, and then a term can be anchored to a person. Operation “Shock and Awe” (where we all got to watch bombs fall on Iraq on live TV back in the Bush years), or calling someone a “Fascist” or “Communist,” or calling someone a “hero,” “patriot,” or “a real American,” these are only a few examples of how labels play a role in propaganda and how we perceive something.

Lacing: Using truth and fact but lacing it with propaganda (or, conversely, lacing counterfeit information with truth and justified belief to make it seem more valid). This is a subtle sort of grey-area BS.

Latitudes of acceptance: Introducing an extreme point of view to encourage acceptance of a more moderate stance, or establishing a barely moderate stance and gradually shifting to an extreme position. Like when you start the barter with a high price and then barter down to the price you wanted in the first place. Congress does this all the time with policy, they put out something crazy and then barter their way back to something they were fine with as long as they got the tax breaks to go with it.

Lesser of Two Evils: Justifying a bad choice by painting another choice as also being a bad choice. X candidate is the “lesser-of-two-evils,” so you should vote for them (or you should do something else). The main problem here is that moral judgments aren’t facts and one thing being “more evil” doesn’t make the other choice good. Counter: Point out that evil is a moral judgment and that calling things “lesser of two evils” is name-calling (a type of propaganda).

Lying: Spreading false or distorted information that justifies an action or a belief and/or encourages acceptance of it.

Love bombing (Milieu control): Using peer or social pressure to engender adherence to an idea or cause; related to brainwashing and mind control. Used to recruit members to a cult or ideology by having a group of individuals cut off a person from their existing social support and replace it entirely with members of the group who deliberately bombard the person with affection in an attempt to isolate the person from their prior beliefs and value system. So “love” with a small “l”, more like crazy cult stuff you should run away from.

Managing the news (talking points): Staying on message, spreading the talking point, and using classical conditioning. The influencing of news media by timing messages to one’s advantage, reinterpreting controversial or unpopular actions or statements (also called spinning), or repeating insubstantial or inconsequential statements that ignore a problem (also called staying on message). According to Adolf Hitler, “The most brilliant propagandist technique will yield no success unless one fundamental principle is borne in mind constantly – it must confine itself to a few points and repeat them over and over.”

Misuse of Statistics and Research: Presenting a statistic or a bit of research in a misleading way.

Non Sequitur: A type of logical fallacy, in which a conclusion is made out of an argument that does not justify it. All invalid arguments can be considered as special cases of non sequitur.

Obfuscation: Intentionally vague and ambiguous messaging, intended to confuse the audience as it seeks to interpret the message, or to use incomprehensibility to exclude a wider audience.

Operant conditioning: Indoctrination by presentation of attractive people expressing opinions or buying products. The idea that sex sells falls under this category.

Oversimplification: Offering generalities in response to complex questions.

Pensée unique (French for “single thought”): The repression of alternative viewpoints by simplistic arguments. For example, “Minimum Wage doesn’t work, it’s simple economics.”… (but like, is it? TIP: It is not).

Quotes out of context: The selective use of quotations to alter the speaker’s or writer’s intended meaning or statement of opinion. Like how people accuse Saul Alinsky of being Satanic because he used Lucifer as a literary device.

Rationalization (Making Excuses, not “Rationalism”): The use of generalities or euphemisms to justify actions or beliefs. Or, simply, using beliefs and opinions and logical fallacies to get someone to rationalize something that isn’t rational and isn’t backed up by empirical truth.

Red herring: Presenting data or issues that, while compelling, are irrelevant to the argument at hand, and then claiming that it validates the argument. This is a very popular technique.

Reductio ad Hitlerum (reducing everything to Hitler): A clever name for reducing everything back to one negative person or event in order to get people to dismiss the idea. The NAZIs had healthcare, therefore universal healthcare is fascist.

Repetition: The repeated use of a word, phrase, statement, or image to influence the audience. The Ingsoc slogan “Our new, happy life,” repeated on telescreens in 1984 is an example of this.

Scapegoating: Blaming a person or a group for a problem so that those responsible for it are absolved of guilt and/or to distract the audience from the problem itself and the need to fix it.

Selective truth: Restrictive use of data or facts to sway opinion that might not be swayed if all the data or facts were given.

Shifting the burden of proof (onus probandi): A technique where instead of proving a claim the other person has to prove it false. For example, a person claims millions of unlawfully present immigrants voted in the 2016 election, you say, “there is no proof of that” and they say, “oh yeah, prove it.”

Slippery slope fallacy: The idea that a shift toward one direction will lead to extremes. “If we allow marriage equality, then people will marry their dogs.” This is a jump in logic that is a sort of logical fallacy of reasoning by analogy.

Sloganeering: The use of short and memorable phrases to encapsulate arguments or opinions on an emotional rather than a logical level.

Stalling and Ignoring the Question: A very common technique is to ignore questions (to avoid giving unpopular answers or specific answers) or to stall (to get more time to think). For example, talking heads will often dismiss the climate change debate with a line like “more research is needed.” Counter: Bring it back to the subject, “with the research we do have, what do you think?” Or, point out, “you did not answer the question, specifically, give me a specific answer, what do you think about X.” If they won’t give an answer, say, “ok, you don’t want to address that question head on, that is your choice, you did say X, so we’ll just have to infer your stance based on that.”

Stereotyping: The incitement of prejudice by reducing a target group, such as a segment of society or people adhering to a certain religion, to a set of undesirable traits.

Straw man: The misrepresentation or distortion of an undesirable argument or opinion, or misidentifying an undesirable persona or an undesirable single person as representative of that belief, or oversimplifying the belief.

Testimonial: The publicizing of a statement by an expert, authority figure, or celebrity in support of an idea, cause, or product in order to prompt the audience to identify with the person and support the idea or cause or buy the product. “Not only am I the CEO, I’m also a client…” well that is distracting from the reality that you are like the most biased person toward the product. Nice spin.

Third-party: Use of a supposedly impartial person or group, such as a journalist or an expert, or a group falsely represented as a grassroots organization, to support an idea or cause or recommend a product. For example, “9 out of 10 dentists recommend this toothpaste.” Essentially a vague version of the testimonial.

Thought-terminating cliché: Use of a truism (or catchy phrase) to stifle dissent or validate faulty logic. “Well you say climate change data is undeniable, I think it’s a hoax by China, who knows? We’ll just have to agree to disagree. You know, everything is just a matter of perspective anyway.” Phrases like “agree to disagree” and “everything is just a matter of perspective” are little more than clever ways to end a discussion. Counter: Point out that agreeing to disagree adds nothing to the debate, it isn’t a fact, it is a “debate-terminating cliché.”

Transfer: The association of an entity’s positive or negative qualities with another entity to suggest that the latter entity embodies those qualities.

Unstated assumption: Another word for implying something but not saying it directly. A form of doublespeak.

Virtue words: Anchoring positive connotations to an idea, brand, or group. Can be used to create a more positive image of an idea, brand, or group or can speak to getting people to embrace an idea, brand, or group by making them think they share positive qualities with the group. For example, the positive propaganda poster below wants you to associate (transfer) the virtue of Jefferson and Jackson, with Democrats, with W.J. Bryan, and with yourself (to get you to vote for Bryan).

Weak Inference (or False Cause): When a judgment is made based on inconclusive evidence. Inductive reasoning draws conclusions from probabilities, if you have a few data points pointing at something it doesn’t make it true, in fact it doesn’t even make for a strong argument. A weak inference is when a weak argument (one that is not strong and doesn’t have lots of facts backing up its certainty) is presented as a strong argument.

Yes, but…: Using the phrase “yes, but…” to reframe the conversation. When someone says something, don’t disagree and thereby trigger a “no response,” instead re-frame and say “yes, but…”, then make your point, and then get them to start giving yes responses. It is a fairly shady trick used to build rapport. Watch out for saying “yes but…” to something that really isn’t a “yes.”

Yes/No Conditioning, eliciting a response, leading: Eliciting a response by asking questions you know a person will give an affirmative or negative response to. If you want someone to reject an idea, get them saying “no” and rejecting ideas. If you want them saying yes, get them saying “yes.” It is a version of the used car salesperson trick where they get you to agree to something small and then work you into something big. “Do you love liberty? Yes! Do you love your family? Yes! Are we going to vote yes on Prop 238? Yes?!… wait, what?” Counter: Don’t let someone else pace the conversation. Give a no answer to a yes question then reframe the conversation by using that break in flow as a pivot point.

CH

Helpful compilation. Of course, everybody has in the end to think for himself.

You state ‚ Whataboutism: A tactic where the propagandist goes, “but what about this other thing.” One of the most common tactics. It is often used in conjunction with the red herring.‘

I personally find it often very subjective or framed. Also in the respective Wikipedia-article, but I like there the ending section:

‚Defense [of whataboutism]

Some commentators have defended the usage of whataboutism and tu quoque in certain contexts. Whataboutism can provide necessary context into whether or not a particular line of critique is relevant or fair. In international relations, behavior that may be imperfect by international standards may be quite good for a given geopolitical neighborhood, and deserves to be recognized as such.

Christian Christensen, Professor of Journalism in Stockholm, argues that the accusation of whataboutism is itself a form of the tu quoque fallacy, as it dismisses criticisms of one’s own behavior to focus instead on the actions of another, thus creating a double standard. Those who use whataboutism are not necessarily engaging in an empty or cynical deflection of responsibility: whataboutism can be a useful tool to expose contradictions, double standards, and hypocrisy.

Others have criticized the usage of accusations of whataboutism by American news outlets, arguing that accusations of whataboutism have been used to simply deflect criticisms of human rights abuses perpetrated by the United States or its allies. They argue that the usage of the term almost exclusively by American outlets is a double standard, and that moral accusations made by powerful countries are merely a pretext to punish their geopolitical rivals in the face of their own wrongdoing.

The scholars Kristen Ghodsee and Scott Sehon posit that mentioning the possible existence of victims of capitalism in popular discourse is often dismissed as “whataboutism”, which they describe as “a term implying that only atrocities perpetrated by communists merit attention.” They also argue that such accusations of “whataboutism” are invalid as the same arguments used against communism can also be used against capitalism.‘

The hard truth is that the question of what counts as a fact is not so easy. Even that question often depends on a frame of reference. (The source for the Whataboutism video on Russia: Euromaidan/Ukraine.)

Thomas DeMichele (The Author)

Yeah, that is like adding another layer on the propaganda pie by way of argument (counterarguments rooted in deflection, reframing, and skepticism where the goal is to say, I think, “whatabout whataboutism, as you, of course, do this thing too, therefore is this thing so bad, and if so, let’s look more at your thing, but if not, well our thing isn’t so bad as everyone does it, plus we are being picked on, etc etc”….). It isn’t that the argument isn’t valid, it is more that taking it down that rabbit hole is mind-numbing and weakens the core point and would have most people tune out… which I mean just makes it also good propaganda. Fun stuff to discuss 🙂

See this page on the types of arguments: http://factmyth.com/the-different-types-of-reasoning-methods-explained-and-compared/

Vromme

What is “Fake News”? – “Fake News,” Lies and Propaganda: How to Sort Fact from Fiction – Research Guides at University of Michigan Library

Mike Todd

Thanks for your work in compiling a very useful resource.

While it is natural that your own views will at the very least “seep through” in what you write, if you ever edit this piece, I would encourage you to consider including more specific examples of left-leaning propaganda, as you did in the entry on “cult of personality.” There is certainly no lack in this age of spin in which we live! It would take very little effort, for example, to dig up egregious examples of ad nauseam, name-calling, sloganeering, stereotyping, and reductio ad Hitlerum from every conceivable part of the political spectrum in 2020.

If I am right in choosing to assume that your purpose here is to shed light on propaganda rather than create it, then adding such examples would help you better accomplish your goal, as uncomfortable as it can be to pull back the curtain on manipulative tactics used by one’s own allies or in support of one’s own causes. In addition, making a concerted effort to avoid presenting “selective truth” would also increase the likelihood that people of all persuasions would find your work helpful and pass it on to others.

That said, please don’t let my quibbles overshadow my commendation. Heartfelt thanks for providing such a helpful summary!

Thomas DeMichele (The Author)

Thank you for the kind words. Any interest can use propaganda, and generally all do. So if I pointed out the propaganda of one side more than another in the examples here, then I agree that I should add more equal weighting… as the article is meant to be on propaganda and not politics. Will keep this in mind during the next edit.

Rick Ryan

wow I could see that there was a set of tactics, and have done some research to see what is the red herring vs. a distraction vs. some other motive – excellent set of information. I will try to use it in context to identify if my theories for motives are true – great work

Thomas DeMichele (The Author)

Thanks for the compliment!

DK McCarty

Nice, however I detect a leftist slant… Yes, a “factual” article illustrating propaganda techniques containing propaganda…

Thomas DeMichele (The Author)

It is always hard to fully remove my own biases from everything I write. I try to approach everything from the center and with respect to my audience and my own biases… but I tend to have a liberal left / social left / capitalist / American bias. Especially in my older work it tends to be more pronounced. Glad you otherwise enjoyed the article. Could always do with another edit!

umtorab mohammed alhassan

thank you soooo much very helpful

Thomas DeMichele (The Author)

Wait… are you appealing to my innate human desire for positive feedback in order to further some agenda?

If so, it worked. Welcome to the comments section. 😀

Terrell

Hi. I am actually learning to master these and the 50 propaganda techniques for positive use to help women business owners. This is so great man 👨🏿. I love you for this. You write well by the way. Hey, Bruce Lee said do everything 10,000 times. I wish me luck. I’ll be the greatest propagandist of all time. Well, after you. So second. Edward Bernays. Okay, third greatest of all time. Thanks to you. And when I say one of them 1,000 X’s straight it is like a world starts to form in my mind. It’s exciting and scary at the same time.

Thomas DeMichele (The Author)

I’m glad to hear you are getting something out of this list. I find it fascinating as well. Have fun, find success, and keep it positive 🙂